Why model selection is an architectural decision, not a benchmark competition

Every CTO who reviews foundation models for AI agents makes the same mistake. They chase benchmark scores instead of production reliability.

Right now, engineering leaders are comparing Claude Opus 4.6 (launched February 5, 2026) against GPT-5.2, scanning spec sheets for the highest numbers. Opus 4.6 hit 65.4% on Terminal-Bench 2.0. GPT-5.2 dominated coding leaderboards for months. The instinct is to pick the winner and move on.

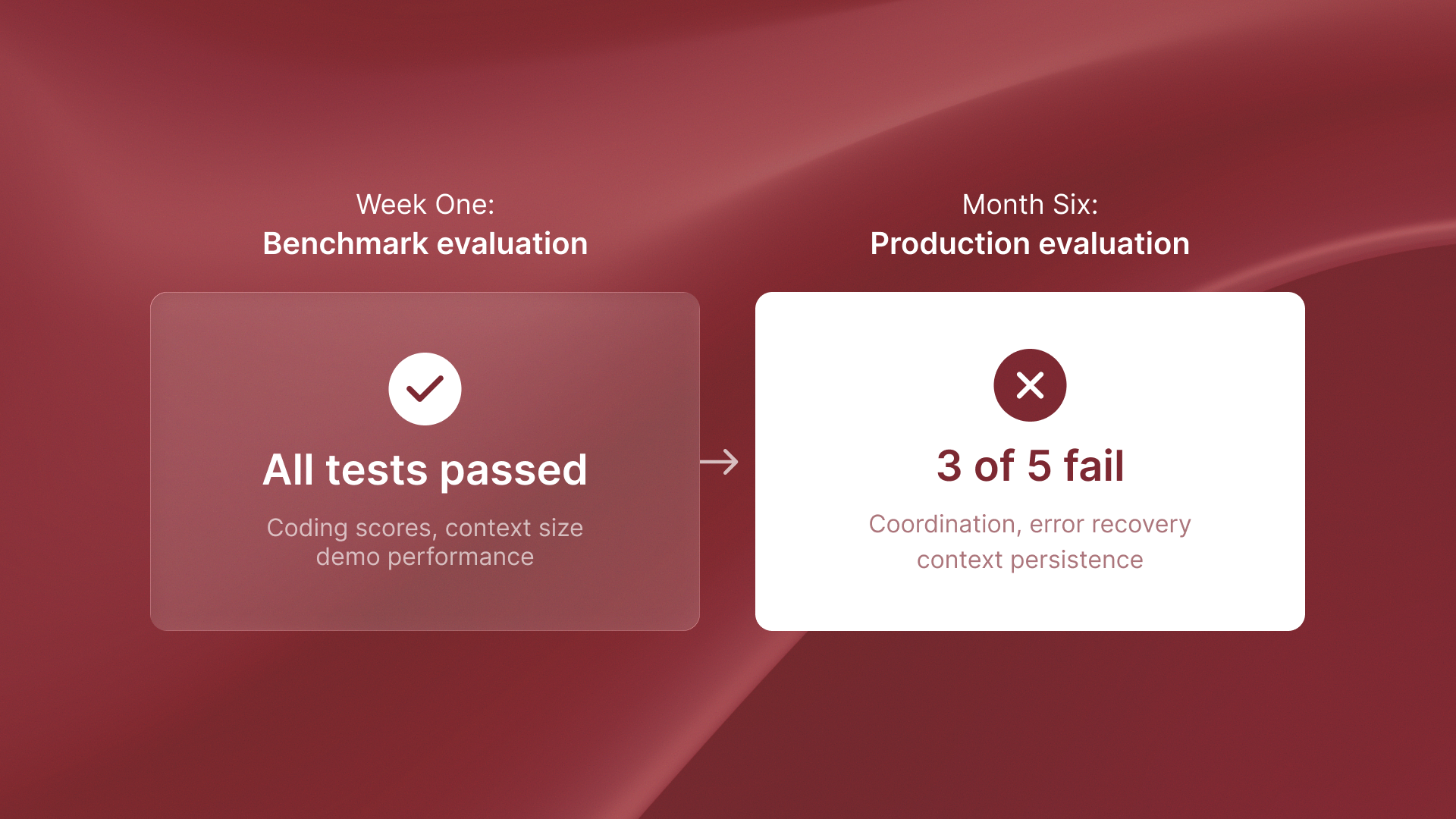

But here's what actually happens: 70% of gen AI pilots never reach production. The model you pick from week-one benchmarks can determine whether you rebuild your whole agent architecture by month six. This isn't about Claude-versus-OpenAI fanboyism. It's about understanding which model characteristics predict production viability rather than demo impressiveness.

The benchmark trap

Most AI model comparisons measure isolated capabilities. Code completion accuracy. Math problem solving. Question answering on academic datasets. These benchmarks tell you how models perform on synthetic tasks in controlled environments.

Production autonomous agents need something different. They must reason through many steps over weeks, keep context across sessions, recover from errors without human help, and coordinate with other agents. Terminal-Bench 2.0 comes closer to measuring this: it tests full workflows (requirement interpretation, environment setup, execution, error recovery), not just code snippet generation. That is why Opus 4.6’s 65.4% score, which reflects real autonomous task completion, matters more than results on generic coding benchmarks.
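To make "full workflow with error recovery" concrete, here is a minimal Python sketch, assuming each phase is a plain function over a shared state dict. `run_workflow`, `WorkflowResult`, and the three step functions are hypothetical names for illustration, not part of Terminal-Bench or any model API; in a real agent, a model call would sit where the comment marks the recovery point.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# A step takes the shared workflow state and returns the updated state,
# or raises an exception when something goes wrong.
Step = Callable[[dict], dict]


@dataclass
class WorkflowResult:
    state: dict
    completed: list = field(default_factory=list)  # names of steps that succeeded
    recoveries: int = 0                             # failed attempts that were retried
    failed_step: Optional[str] = None               # set if a step exhausted its retries


def run_workflow(steps: list, state: dict, max_retries: int = 2) -> WorkflowResult:
    """Run (name, step) pairs in order, retrying a failing step up to max_retries times."""
    result = WorkflowResult(state=dict(state))
    for name, step in steps:
        attempts = 0
        while True:
            try:
                result.state = step(result.state)
                result.completed.append(name)
                break
            except Exception as exc:
                attempts += 1
                if attempts > max_retries:
                    result.failed_step = name
                    return result
                # Record the error in state so the retry can adapt to it. In a real
                # agent, this is where the model would read the error and re-plan.
                result.state.setdefault("errors", []).append(f"{name}: {exc}")
                result.recoveries += 1
    return result


# Hypothetical steps mirroring the workflow phases named above.
def interpret(s: dict) -> dict:
    return {**s, "spec": "parse CSV and report totals"}


def setup_env(s: dict) -> dict:
    # Fails until an error has been recorded, simulating a missing dependency
    # that the retry path then works around.
    if not s.get("errors"):
        raise RuntimeError("missing dependency")
    return {**s, "env": "ready"}


def execute(s: dict) -> dict:
    return {**s, "done": True}


if __name__ == "__main__":
    outcome = run_workflow(
        [("interpret", interpret), ("setup", setup_env), ("execute", execute)], {}
    )
    print(outcome.completed, outcome.recoveries, outcome.failed_step)
```

The retry budget is deliberately explicit: an agent that can recover should still stop and report which step failed once the budget is spent, rather than loop indefinitely.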

Deloitte found only 30% of gen AI pilots reach production. The gap exists because teams choose models during demos, when benchmarks look impressive, rather than during long production runs, when context limits, token costs, and failures matter.

What actually matters for production agents

Claude Opus 4.6 scored 76% on MRCR v2 long-context retrieval, compared with 18.5% for Sonnet 4.5. This isn't incremental improvement. It’s the difference between agents that can reason about whole system architectures and agents that can only change single functions in isolation.

Our experience with QA flow and ReachSocial revealed this pattern repeatedly. Models that scored 2–3% higher on isolated coding benchmarks but lacked persistent context and multi-agent orchestration features created architectural debt, which forced complete rewrites when scaling from POC to production.

The Opus 4.6 agent teams feature supports new architectural patterns built around specialist agents and a coordinator. These patterns are not possible with GPT-5.2's single-model API, which fundamentally changes what autonomous systems you can build. It's not about raw performance. It's about whether the model's architecture supports the agent coordination patterns production systems require.
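The coordinator/specialist shape itself can be sketched independently of any vendor API. The Python below is a minimal, sequential illustration, assuming each specialist is a plain callable keyed by task kind. `Coordinator`, `Task`, and the task kinds are hypothetical names; this is not the Opus 4.6 agent teams interface.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

# A specialist handles one kind of task. In a real system each might wrap its
# own model session; here they are plain functions (hypothetical).
Specialist = Callable[[str], str]


@dataclass
class Task:
    kind: str     # e.g. "tests" or "review" (hypothetical task kinds)
    payload: str  # what the specialist should work on


class Coordinator:
    """Routes tasks to registered specialists and keeps a shared log of results."""

    def __init__(self) -> None:
        self._specialists: Dict[str, Specialist] = {}
        self.log: List[Tuple[str, str]] = []  # (task kind, specialist output)

    def register(self, kind: str, specialist: Specialist) -> None:
        self._specialists[kind] = specialist

    def run(self, tasks: List[Task]) -> Dict[str, List[str]]:
        results: Dict[str, List[str]] = {}
        for task in tasks:
            specialist = self._specialists.get(task.kind)
            if specialist is None:
                raise KeyError(f"no specialist registered for {task.kind!r}")
            output = specialist(task.payload)
            self.log.append((task.kind, output))
            results.setdefault(task.kind, []).append(output)
        return results


if __name__ == "__main__":
    coord = Coordinator()
    coord.register("tests", lambda p: f"tests for {p}")
    coord.register("review", lambda p: f"review of {p}")
    print(coord.run([Task("tests", "parser.py"), Task("review", "parser.py")]))
```

A production version would likely run specialists concurrently and let the coordinator re-plan from the shared log; the sketch keeps only the routing and the shared record, which is the part a single-model design has to rebuild by hand.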

The month-six test

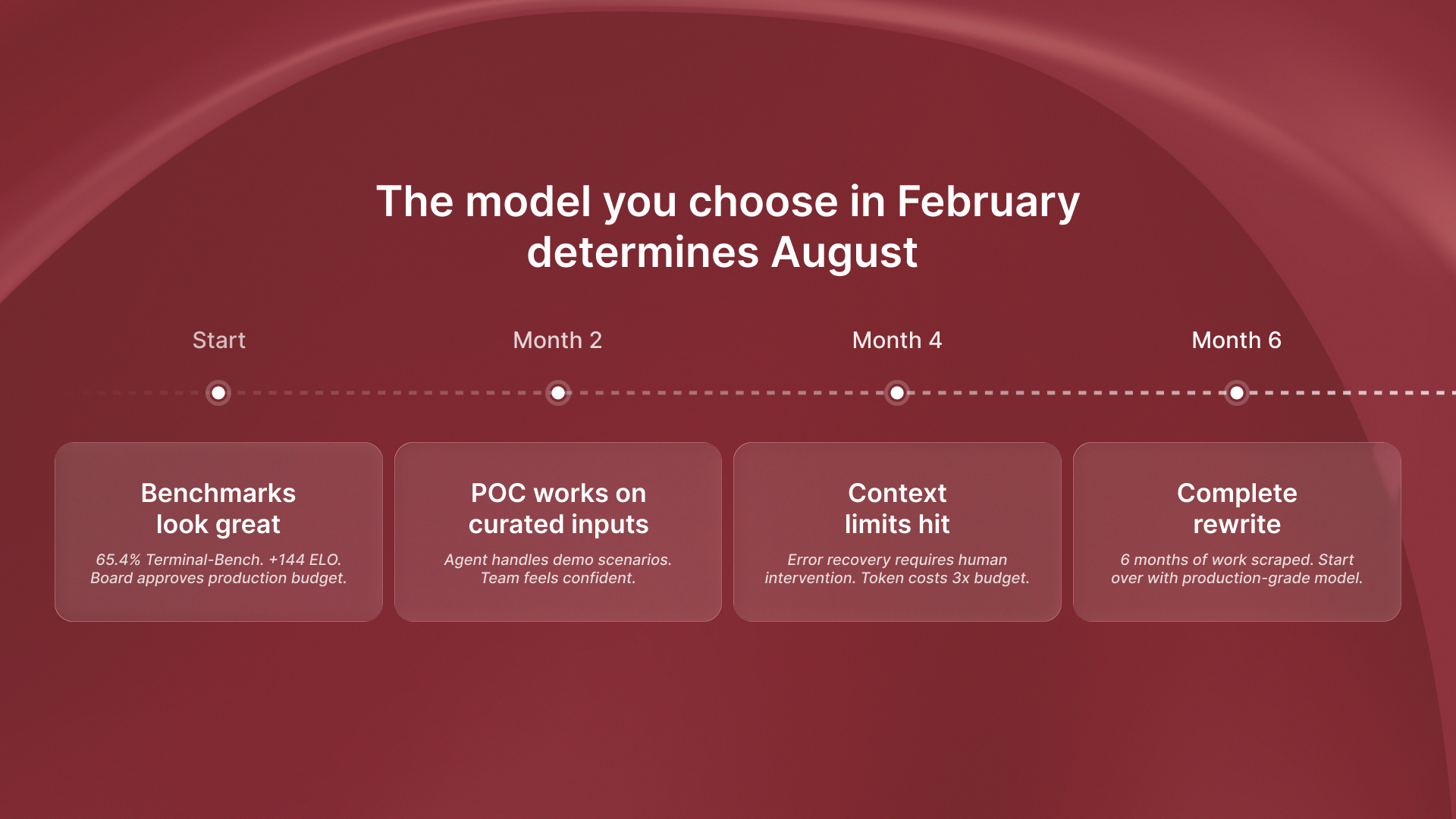

Here’s the strategic point: the model you choose in February 2026 may force you to rebuild your agent system in August 2026.

Engineering teams selecting models based on coding benchmarks will hit context limits and coordination problems that require fundamental rewrites. A model may demo well yet fail to keep context across multi-day workflows. If it can’t coordinate between specialist agents, you must build workarounds, and those workarounds often become technical debt.

Teams selecting based on production reliability characteristics - context handling, agent orchestration, error recovery - will scale from POC to production without architectural rewrites. When QA flow chose its foundation model, the decision wasn't about which scored highest on HumanEval. It was about which could maintain test context across entire codebases and recover from edge cases autonomously.
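One way to make "choose for month six" concrete is a weighted scorecard. The sketch below assumes you have scored each candidate from 0 to 1 on your own long-running evaluations; `ModelProfile`, both weightings, and the two candidates are hypothetical and illustrative, not measured results or a recommended weighting.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    name: str
    coding_benchmark: float  # 0..1, normalized public leaderboard score
    context_handling: float  # 0..1, from your own multi-day evals (assumed)
    orchestration: float     # 0..1, multi-agent coordination in your stack (assumed)
    error_recovery: float    # 0..1, unattended recovery rate (assumed)


# Illustrative weightings only: one mirrors a week-one demo, one a month-six view.
WEEK_ONE_WEIGHTS = {"coding_benchmark": 1.0}
MONTH_SIX_WEIGHTS = {
    "coding_benchmark": 0.1,
    "context_handling": 0.3,
    "orchestration": 0.3,
    "error_recovery": 0.3,
}


def score(profile: ModelProfile, weights: dict) -> float:
    """Weighted average of the profile's criteria, normalized by total weight."""
    total = sum(weights.values())
    return sum(getattr(profile, key) * w for key, w in weights.items()) / total


def rank(profiles: list, weights: dict) -> list:
    """Model names ordered from highest to lowest weighted score."""
    ordered = sorted(profiles, key=lambda p: score(p, weights), reverse=True)
    return [p.name for p in ordered]


# Hypothetical candidates, not real models or measurements.
CANDIDATES = [
    ModelProfile("model-a", coding_benchmark=0.95, context_handling=0.4,
                 orchestration=0.4, error_recovery=0.4),
    ModelProfile("model-b", coding_benchmark=0.90, context_handling=0.8,
                 orchestration=0.8, error_recovery=0.8),
]

if __name__ == "__main__":
    print("week one: ", rank(CANDIDATES, WEEK_ONE_WEIGHTS))
    print("month six:", rank(CANDIDATES, MONTH_SIX_WEIGHTS))
```

With these made-up inputs, `model-a` wins on coding weight alone while `model-b` wins under the month-six weighting. The takeaway is that the ranking depends on what you choose to weight, not on the specific numbers; normalizing by total weight keeps scores comparable if you add or drop criteria.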

Model selection isn't a reversible decision when you've built six months of agentic workflows on top of it. Choose for month-six production, not week-one demos. The companies making this architectural choice in early 2026 will not need to rebuild their agent stack in Q3.
