Why 92% of developers use AI tools but their teams still follow 2015 workflows

Your engineering team adopted AI coding tools. Your workflows didn't. That mismatch is costing you more than you think.

92% of US developers now use AI coding tools, according to DesignRush (2025). Globally, adoption jumped from 76% to 84% in a single year, and 41% of code written worldwide is now AI-generated. Yet most engineering teams still run workflows designed for manual coding in 2015. The result is a paradox: AI accelerates code production while legacy processes become the bottleneck, and technical debt accumulates faster than teams can pay it down.

Here's what's at stake: teams that redesign their development lifecycle around AI capabilities now will compound their advantage for years. Those that don't will find themselves buried under validation debt they can't clear.

The volume shift no one talks about

AI tools generate code 10x faster than humans write it. That sounds like pure upside until you realize your code review process, test suites, and deployment gates were all built for human-paced work. When 41% of your codebase is AI-generated, processes sized for human output collapse under the volume.

The bottleneck has shifted from writing code to validating it at scale. Your CI/CD pipeline wasn't designed to handle this throughput. Your manual QA process can't keep pace. Your review queue grows, and branches waiting on review pile up merge conflicts that compound daily. This is the hidden fragility created by high AI adoption without workflow transformation.

We've seen this pattern across our portfolio. Teams first added AI tools to 2015-era workflows and saw productivity gains. Later, they hit a wall because validation became the main constraint. The competitive advantage goes to teams that rebuild their entire development lifecycle around AI-native workflows.

What AI-native development actually requires

Traditional workflows assume humans write code, humans review it, and humans define test cases. AI-native development inverts this. Autonomous systems like QA flow generate and execute test cases from design specs, so validation no longer waits on a human to write each test. The architecture shift is fundamental, not incremental.

Here's the framework: redesign around three layers. First, autonomous testing that validates AI-generated code at the same speed it's produced. Second, intelligent deployment gates that use ML to predict integration risks instead of relying on manual approval chains. Third, continuous learning loops where production behavior automatically informs development priorities.
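To make the second layer more concrete, here is a minimal Python sketch of a risk-scored deployment gate. Everything in it is an assumption for illustration: the `Change` fields, the hand-picked weights (a stand-in for a model you would actually train on your own merge history), and the 0.3 threshold are hypothetical and not taken from any specific tool.

```python
import math
from dataclasses import dataclass


@dataclass
class Change:
    """Hypothetical features describing one proposed change."""
    lines_changed: int
    files_touched: int
    test_coverage: float  # fraction of changed lines exercised by tests, 0.0 to 1.0
    ai_generated: bool


# Assumed, hand-picked weights standing in for a trained model.
WEIGHTS = {"lines": 0.004, "files": 0.15, "coverage": -3.0, "ai": 0.5}
BIAS = -1.0


def risk_score(change: Change) -> float:
    """Return a logistic risk score between 0 and 1 (higher means riskier)."""
    z = (
        BIAS
        + WEIGHTS["lines"] * change.lines_changed
        + WEIGHTS["files"] * change.files_touched
        + WEIGHTS["coverage"] * change.test_coverage
        + WEIGHTS["ai"] * (1.0 if change.ai_generated else 0.0)
    )
    return 1.0 / (1.0 + math.exp(-z))


def gate(change: Change, threshold: float = 0.3) -> str:
    """Route low-risk changes to automatic deploy and the rest to a human."""
    return "auto-deploy" if risk_score(change) < threshold else "human-review"


if __name__ == "__main__":
    small = Change(lines_changed=20, files_touched=1, test_coverage=0.9, ai_generated=True)
    large = Change(lines_changed=800, files_touched=12, test_coverage=0.2, ai_generated=True)
    print(gate(small))  # auto-deploy
    print(gate(large))  # human-review
```

The design point is the routing, not the weights: human attention goes only to the changes a model flags as risky, instead of every change waiting in the same approval chain.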

Most teams are treating AI tools as productivity enhancers for old workflows instead of catalysts for workflow replacement. This compounds technical debt because you're accelerating code production without accelerating validation, creating a growing gap between what's shipped and what's actually verified.
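A rough way to see that gap grow is to model it. The sketch below is a toy Python calculation, not a measurement: `validation_backlog` and its parameters are hypothetical names, and the weekly numbers in the usage note are made up for illustration.

```python
def validation_backlog(produced_per_week: int, validated_per_week: int, weeks: int) -> list[int]:
    """Return the count of shipped-but-unverified changes at the end of each week.

    Assumes constant weekly rates, which real teams will not have.
    """
    backlog = 0
    history = []
    for _ in range(weeks):
        # The backlog grows by whatever is produced beyond validation capacity,
        # and can never drop below zero.
        backlog = max(0, backlog + produced_per_week - validated_per_week)
        history.append(backlog)
    return history
```

With 50 changes produced and 30 validated per week, `validation_backlog(50, 30, 4)` returns `[20, 40, 60, 80]`: the gap widens by 20 every week until validation capacity catches up with production.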

When traditional workflows still make sense

Not every team should rebuild immediately. Highly regulated industries with 20-year compliance requirements may need human-in-the-loop validation. Systems with decades-long lifespans benefit from slower, more deliberate development cycles. Teams with fewer than 10 engineers often haven't reached the scale where workflow bottlenecks matter.

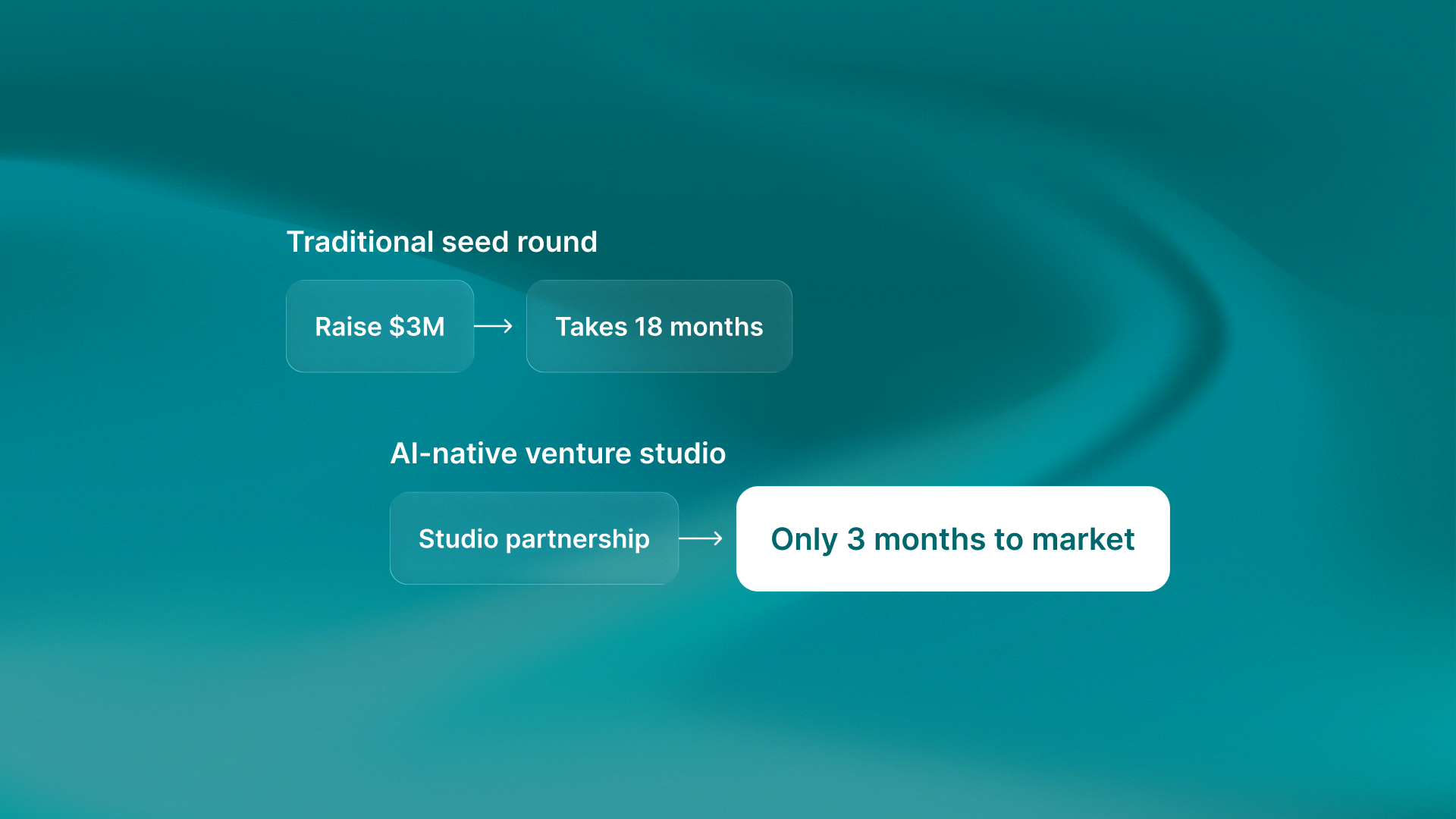

But for Series B+ startups shipping weekly, the window is closing. Teams that reshape their development lifecycle around AI workflows in 2026 will gain an 18- to 24-month edge, and that advantage will compound before AI workflows become table stakes. The shift from human-paced to AI-paced development isn't optional anymore.
